If you're a software engineer, you already know how to build systems that survive change. You swap database drivers without rewriting queries. You switch cloud providers without rebuilding your application. You update dependencies through a config file, not a code rewrite. This is just good architecture—separating what changes (implementation details) from what stays stable (your application logic).

In traditional robotics development workflows, hardware choices feel more permanent. Changing a camera often means rewriting vision processing code. Upgrading a motor controller turns into rebuilding motion logic. Swap sensors, and you need to refactor integration layers. The hardware ends up dictating the architecture, and that's the problem.

Hardware agnosticism offers a different approach. It's the idea that hardware should be the replaceable layer, not the permanent foundation of your robotic system. In practice, it means that your robot's intelligence (its perception, decision-making, and behavior) can outlive any individual component.

For software engineers exploring robotics, this isn't a new concept. These are the same abstraction principles you already know, applied to physical devices. In robotics, they fundamentally change what's possible to build.

The problem: when hardware becomes architecture

Here's the pattern most robotics development follows:

You choose a camera and write vision code specifically for that camera's SDK. You pick a motor controller and build motion logic around that vendor's protocol. You select sensors and integrate using vendor-specific drivers.

This is like writing database queries directly in your application code instead of using an ORM. Or building AWS-specific logic throughout your app instead of using cloud-agnostic abstractions. You can do it—but you're creating tight coupling that'll hurt later.

The consequences compound quickly:

- Hardware changes require cascading software rewrites

- Component upgrades become full system redesigns

- Technical debt accumulates with each hardware-specific integration

- Teams get locked into vendors not by choice, but by code dependency

This happens because there's no abstraction layer between hardware and application logic—a problem software engineering solved decades ago with interfaces, ORMs, and standardized APIs.

Hardware longevity: what it means and why it matters

Building robots that outlive their parts is about architectural resilience. Your robot's intelligence—its perception, decision-making, and behavior—should be the durable layer. Hardware should be the replaceable layer. The system you’re building on should be able to absorb hardware changes without cascading rewrites.

Consider the difference in architectural structures:

Rigid, tightly coupled: Hardware → Vendor SDK → Application Logic

Resilient, loosely coupled: Hardware → Standardized Interface → Application Logic

This should feel familiar. It's the same principle you apply when you:

- Use database interfaces (JDBC, SQLAlchemy) instead of vendor-specific drivers

- Use HTTP/REST instead of hand-rolling a protocol over raw TCP sockets

- Use cloud abstraction layers instead of AWS/GCP-specific code
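The loosely coupled structure above can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's or platform's actual API: `DepthCamera`, `VendorASdk`, `VendorBSdk`, the adapter classes, and `mean_surface_distance` are all hypothetical names, and the point values are made up. The idea is that each vendor quirk (units, data layout) is confined to a small adapter, while the application logic depends only on the shared interface.

```python
from dataclasses import dataclass
from typing import Protocol

Point = tuple[float, float, float]  # (x, y, z) in meters


class DepthCamera(Protocol):
    """Hypothetical standardized interface the application codes against."""

    def get_point_cloud(self) -> list[Point]: ...


class VendorASdk:
    """Stand-in for one vendor's SDK: integer millimeters in tuples."""

    def grab_points_mm(self) -> list[tuple[int, int, int]]:
        return [(0, 0, 500), (10, 0, 510), (0, 10, 490)]


class VendorBSdk:
    """Stand-in for another vendor's SDK: meters in dictionaries."""

    def read_frame(self) -> list[dict[str, float]]:
        return [{"x": 0.0, "y": 0.0, "z": 0.8}, {"x": 0.02, "y": 0.0, "z": 0.82}]


@dataclass
class VendorACamera:
    """Adapter: the only code that knows about VendorASdk's units."""

    sdk: VendorASdk

    def get_point_cloud(self) -> list[Point]:
        return [(x / 1000, y / 1000, z / 1000) for x, y, z in self.sdk.grab_points_mm()]


@dataclass
class VendorBCamera:
    """Adapter: the only code that knows about VendorBSdk's data layout."""

    sdk: VendorBSdk

    def get_point_cloud(self) -> list[Point]:
        return [(p["x"], p["y"], p["z"]) for p in self.sdk.read_frame()]


def mean_surface_distance(camera: DepthCamera) -> float:
    """Application logic: depends on the interface, not on a vendor."""
    cloud = camera.get_point_cloud()
    return sum(z for _, _, z in cloud) / len(cloud)


if __name__ == "__main__":
    # The same function runs unchanged against either camera.
    for cam in (VendorACamera(VendorASdk()), VendorBCamera(VendorBSdk())):
        print(type(cam).__name__, mean_surface_distance(cam))
```

Swapping hardware in this sketch means writing one new adapter; `mean_surface_distance` and anything built on it stay as they are.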

Real-world example: Same logic, better hardware

When we set out to build the SurfaceAI fiberglass sanding solution, we started with a popular off-the-shelf depth camera. Mounted to the robot arm, it scanned boat surfaces to create 3D models for sanding plans. But as we pushed toward production-quality sanding, we discovered its limitations: noisy point clouds, poor performance beyond 50 cm, and susceptibility to surface glare. All three are critical issues when scanning the complex curves of fiberglass boat parts.

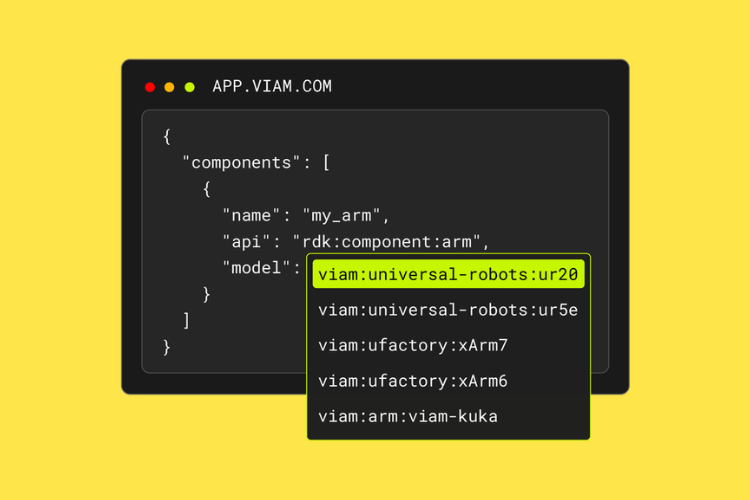

In traditional robotics development, this would have forced us to make a difficult choice: make do with suboptimal hardware, or spend weeks rewriting drivers and control logic to switch cameras. With Viam's hardware abstraction layer, we had a third option: evaluate alternatives without touching application code.

We identified the Orbbec Astra 2 as a promising candidate and ran side-by-side tests with both cameras. The results were dramatic. At 80 cm, a distance that matters for capturing larger surface areas in fewer scans, the Astra 2 delivered smoother, more accurate point clouds with less noise than the original camera. Most importantly for our reflective fiberglass surfaces, the Astra 2's depth sensing remained unaffected by the glare that created large holes in the original camera's point cloud.

Here's what didn't change: The perception code. The sanding logic. The motion planning algorithms. All of the control logic and planning algorithms we wrote with the first depth camera worked out of the box with the Orbbec, because they talk to Viam's camera API, which both cameras implement.
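To illustrate why a swap like this can be a configuration change rather than a code change, here is a generic sketch of the pattern. It does not reproduce Viam's SDK or configuration schema: the registry, the model strings, the `scan-camera` name, and the placeholder point data are all assumptions made for the example. The application looks a component up by a stable name, and only the configuration decides which implementation sits behind that name.

```python
from typing import Callable, Protocol


class Camera(Protocol):
    """Hypothetical shared camera interface."""

    def get_point_cloud(self) -> list[tuple[float, float, float]]: ...


class OriginalDepthCamera:
    """Placeholder for the first camera's driver."""

    def get_point_cloud(self) -> list[tuple[float, float, float]]:
        return [(0.0, 0.0, 0.5)]


class ReplacementDepthCamera:
    """Placeholder for the replacement camera's driver."""

    def get_point_cloud(self) -> list[tuple[float, float, float]]:
        return [(0.0, 0.0, 0.8), (0.1, 0.0, 0.8)]


# Hypothetical registry mapping a model string to a constructor.
MODEL_REGISTRY: dict[str, Callable[[], Camera]] = {
    "original-depth-camera": OriginalDepthCamera,
    "replacement-depth-camera": ReplacementDepthCamera,
}


def build_components(config: dict) -> dict[str, Camera]:
    """Instantiate every configured component, keyed by its stable name."""
    return {c["name"]: MODEL_REGISTRY[c["model"]]() for c in config["components"]}


def count_scan_points(components: dict[str, Camera]) -> int:
    """Application code: knows the name 'scan-camera', not the model."""
    return len(components["scan-camera"].get_point_cloud())


# Only the "model" value differs between the two configurations.
config_before = {"components": [{"name": "scan-camera", "model": "original-depth-camera"}]}
config_after = {"components": [{"name": "scan-camera", "model": "replacement-depth-camera"}]}
```

Calling `count_scan_points(build_components(config_before))` and then the same with `config_after` exercises both cameras through identical application code, which is also what makes side-by-side evaluation cheap.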

This rapid testing and data-driven decision-making would've been impossible if we'd been locked into our initial camera choice by integration complexity. Instead, we made the switch and moved on to the next challenge.